Healthcare AI Scribes Need More Than Good Intentions

A recent CBC story, “AI scribe system hallucinations raise concerns among Ontario doctors,” has crystallized something many in health care and government have been sensing for months: the push to reduce documentation burden with AI is moving faster than the governance needed to keep patients, clinicians, and institutions safe.

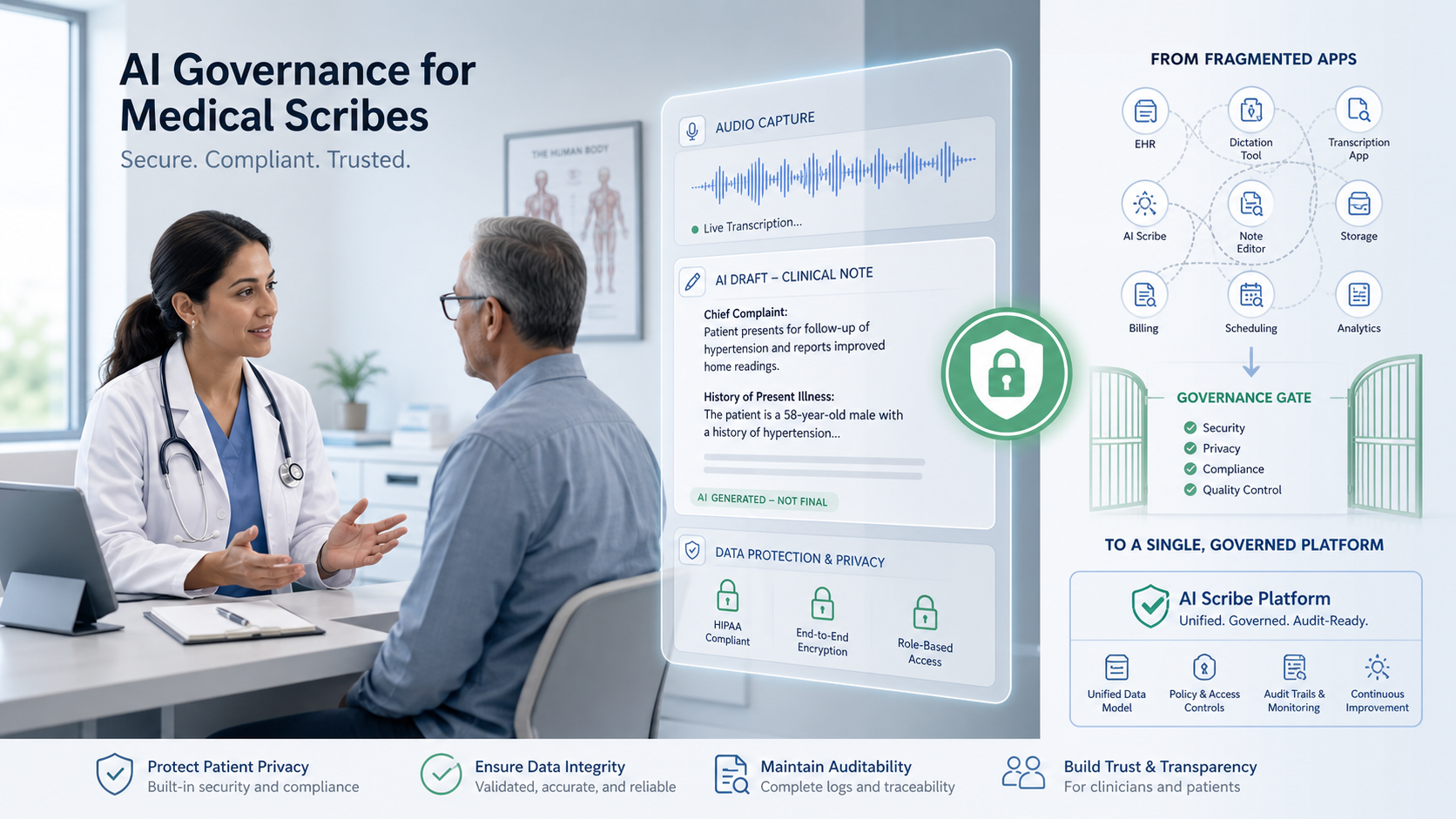

The story matters because it points to a larger structural problem. AI scribes are often treated as point tools that save clinicians time, but the tool in isolation is not the real issue. The real issue is the absence of a governing operating model for how these tools are introduced, supervised, measured, and constrained in regulated environments.

The problem is not just the scribe

The conversation around AI scribes tends to focus on whether a particular product is accurate, fast, or easy to deploy. That is too narrow. When an AI system is drafting or editing the medical record, the questions must include: Who is accountable? How is consent handled? Where does the data go? What happens when the system makes a serious error? How quickly can we see, explain, and remediate that error?

Seen through that lens, the question is not whether health systems should use AI scribes. The real problem is that many organizations still lack an executive mechanism for governing AI systems across the full lifecycle, from procurement and policy, through day‑to‑day operations, to audit, incident response, and retirement.

That gap is not unique to health care. It is appearing across government and other regulated sectors wherever AI is moving from pilot to production.

VectoredValue’s view

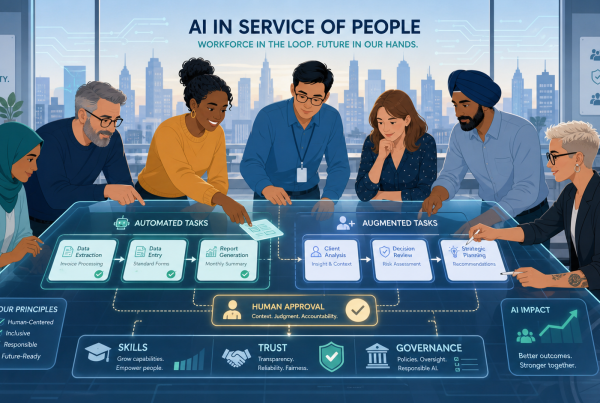

VectoredValue’s perspective is that this is fundamentally a Chief AI Officer (CAIO) problem, not just a product problem. A health organization may purchase a scribe application, but what it actually needs is a way to govern AI as an operating function.

That is why CAIO as a Service is a more relevant framework than evaluating scribe products one at a time. It gives organizations a way to establish executive accountability, decision rights, risk thresholds, procurement standards, and evidence requirements before AI tools scale into clinical and administrative workflows.

The delivery layer for that operating model is an AI Fabric Lab: an industry‑specific environment designed to standardize how AI tools plug into policy, telemetry, controls, and auditability without forcing every organization to reinvent the governance stack from scratch. In health care, the Fabric Lab becomes the place where scribe systems are tested, instrumented, and governed before and during deployment.

What this public response is saying now

VectoredValue did not work with the organization in the CBC story and is not claiming any involvement in that specific deployment. This is a public response intended to sharpen the market’s understanding of what the story is actually revealing.

What it reveals is this:

- AI scribe adoption is becoming a governance issue before it becomes a mature operational capability.
- Privacy, consent, oversight, and vendor accountability cannot be managed as downstream compliance tasks. They must be designed into the operating model from the start.
- The sector now needs a practical way to stand up CAIO‑level governance without waiting years to build that capacity internally.

That is the opening for CAIO as a Service and AI Fabric Labs: not as abstract consulting language, but as the missing delivery framework between public concern and safe implementation.

What can be done now

For government agencies, health systems, and sector leaders, the next step is not to pause innovation. It is to stop treating AI scribes as isolated apps and start treating them as governed AI services that require an operating model.

A practical starting point could include:

- Defining clear executive accountability for AI scribes and related clinical AI tools (a CAIO mandate, even if fractional at first).
- Requiring vendor transparency on data handling, model behavior, monitoring, and incident support as a condition of procurement and renewal.
- Establishing a fabric‑based governance layer (an AI Fabric Lab) that can enforce policy, capture evidence, and support meaningful human oversight across tools and workflows.
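
To make the idea of a governance layer concrete, here is a minimal sketch of what "enforce policy, capture evidence, and support human oversight" could look like in code. Everything in it is an assumption made for illustration: `DraftNote`, `GovernanceGate`, the three policy checks, and the vendor name `example-scribe` are hypothetical and do not describe any real product or an actual AI Fabric Lab implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DraftNote:
    """A scribe-generated draft awaiting entry into the record (hypothetical shape)."""
    note_id: str
    patient_consented: bool    # consent to AI-assisted documentation was recorded
    clinician_reviewed: bool   # a clinician has read and signed off on the draft
    vendor: str


@dataclass
class GovernanceGate:
    """Illustrative policy gate: checks a draft and logs evidence of each decision."""
    approved_vendors: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def evaluate(self, note: DraftNote) -> bool:
        # Collect every failed policy check rather than stopping at the first.
        failures = []
        if not note.patient_consented:
            failures.append("missing_consent")
        if not note.clinician_reviewed:
            failures.append("missing_clinician_review")
        if note.vendor not in self.approved_vendors:
            failures.append("unapproved_vendor")

        allowed = not failures
        # Capture evidence for every decision, whether allowed or blocked.
        self.audit_log.append({
            "note_id": note.note_id,
            "allowed": allowed,
            "failures": failures,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        return allowed


# Usage: one draft passes all checks, one is blocked pending clinician review.
gate = GovernanceGate(approved_vendors={"example-scribe"})
ok = gate.evaluate(DraftNote("n1", True, True, "example-scribe"))
blocked = gate.evaluate(DraftNote("n2", True, False, "example-scribe"))
```

The design point is that the gate sits outside any single vendor's tool: the same checks and the same audit trail apply to every scribe that plugs in, which is what allows accountability to rest with the organization rather than the product.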

The CBC article is valuable because it surfaces the warning signs early. The opportunity now is to respond with better architecture for adoption: CAIO as a Service as the governance framework, and healthcare AI Fabric Labs as the delivery model that helps organizations implement AI scribes responsibly, at scale.

Reference article: “AI scribe system hallucinations raise concerns among Ontario doctors,” CBC News

https://www.cbc.ca/news/canada/toronto/ai-scribe-system-hallucinations-9.7197049